Automatic Regression Testing - The ART of feature testing

What is Automatic Regression Testing (ART)?

Automatic regression testing is a technique that uses automated tools and scripts to retest an application after changes or enhancements have been made, ensuring that previously developed and tested functionality still works correctly.

In the journey of product or feature development, various testing methods are employed prior to its release. Traditionally, much of this testing is conducted manually, which carries the inherent risk of overlooking certain test cases.

Why Automatic Regression Testing?

To increase the reliability and robustness of our products, it is vital to invest in a comprehensive testing strategy that goes beyond manual and automated testing. The concept of Automatic Regression Testing (ART) has emerged as a promising solution. In this article, we will explore what ART is, how it has proven beneficial, and the level of confidence it instills in the product development process.

Functional Programming

Functional programming is a style of programming where we focus on using functions to perform tasks, just like in mathematics. It distinguishes between two primary types of functions:

- Pure functions: functions that have no side effects; they consistently produce the same output for a given input, regardless of the external system or environment.

- Impure functions: functions that depend on or modify external state, such as a database, a cache, or a remote service, and can therefore yield varying results depending on the environment.

Automatic Regression Testing (ART) aims to test new product versions against live data. To accomplish this, every impure function call must be recorded along with its result, so that the recorded result can stand in for the real call during replay. This ensures that the overall workflow remains unaffected and allows for comprehensive end-to-end testing.
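The distinction between pure and impure functions, and why only the impure ones need recording, can be sketched in Python. This is a minimal illustration rather than Juspay's implementation: `add_fee`, `current_millis`, and the `Recorder` class are hypothetical names invented for the example.

```python
import time
from typing import Any, Callable


def add_fee(amount: int, fee: int) -> int:
    """Pure: the same inputs always give the same output, so nothing is recorded."""
    return amount + fee


def current_millis() -> int:
    """Impure: the result depends on the system clock."""
    return time.time_ns() // 1_000_000


class Recorder:
    """Hypothetical helper that captures impure results and later replays them."""

    def __init__(self) -> None:
        self.entries: list[Any] = []
        self.replaying = False
        self._cursor = 0

    def call(self, impure_fn: Callable[[], Any]) -> Any:
        if self.replaying:
            # Replay: return the recorded value instead of touching the outside world.
            value = self.entries[self._cursor]
            self._cursor += 1
            return value
        # Record: run the real function and keep its result.
        value = impure_fn()
        self.entries.append(value)
        return value

    def start_replay(self) -> None:
        self.replaying = True
        self._cursor = 0
```

In record mode, `recorder.call(current_millis)` runs the real function and stores the result. After `start_replay()`, the same call returns the stored value, so the code under test sees exactly what it saw in the live environment.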

Components of Automatic Regression Testing

1. Recording Block

2. Replay System

Let us take a deep dive into these components.

Recording Block

In a live environment, the number of test cases can be immense, encompassing all potential scenarios. During the recording process, each incoming request is captured, along with its associated storage entries, external calls (including all impure functions), and the corresponding response. This thorough documentation of the live environment allows for an extensive range of test cases.

In our use case, the recorder captures:

- The complete request

- DB entries

- Redis entries

- External calls

- The final response

For example, each type of entry stores the following fields:

- DB entry: where clause, set clause, model name, result

- Redis entry: key, result

- External API call entry: URL, payload, method, result
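One possible shape for a recorded session is sketched below using Python dataclasses. The fields of each entry come from the lists above; the class names, the `RecordedSession` wrapper, and the choice of JSON for persistence are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Optional


@dataclass
class DbEntry:
    where_clause: dict[str, Any]
    set_clause: Optional[dict[str, Any]]  # None for find queries
    model_name: str
    result: Any


@dataclass
class RedisEntry:
    key: str
    result: Any


@dataclass
class ExternalCallEntry:
    url: str
    payload: Any
    method: str
    result: Any


@dataclass
class RecordedSession:
    """Hypothetical container for everything captured for one request."""

    request: dict[str, Any]
    response: dict[str, Any]
    db_entries: list[DbEntry] = field(default_factory=list)
    redis_entries: list[RedisEntry] = field(default_factory=list)
    external_calls: list[ExternalCallEntry] = field(default_factory=list)


def to_json(session: RecordedSession) -> str:
    """Serialize a session so it can be written to persistent storage."""
    return json.dumps(asdict(session))
```

Keeping the request, the response, and every intermediate entry in one record means a single file is enough to reconstruct the session later.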

Replay System

Once recorded, the data is held in persistent storage. Let's now look at the replay process.

Using the recorded file, a request is constructed and the application is run in ART_MODE. In this mode, no actual storage calls or external calls are made. Instead, the data for each call is compared with the recorded information. If the data matches the recorded data, a correct response is provided; otherwise, the session is marked as failed.

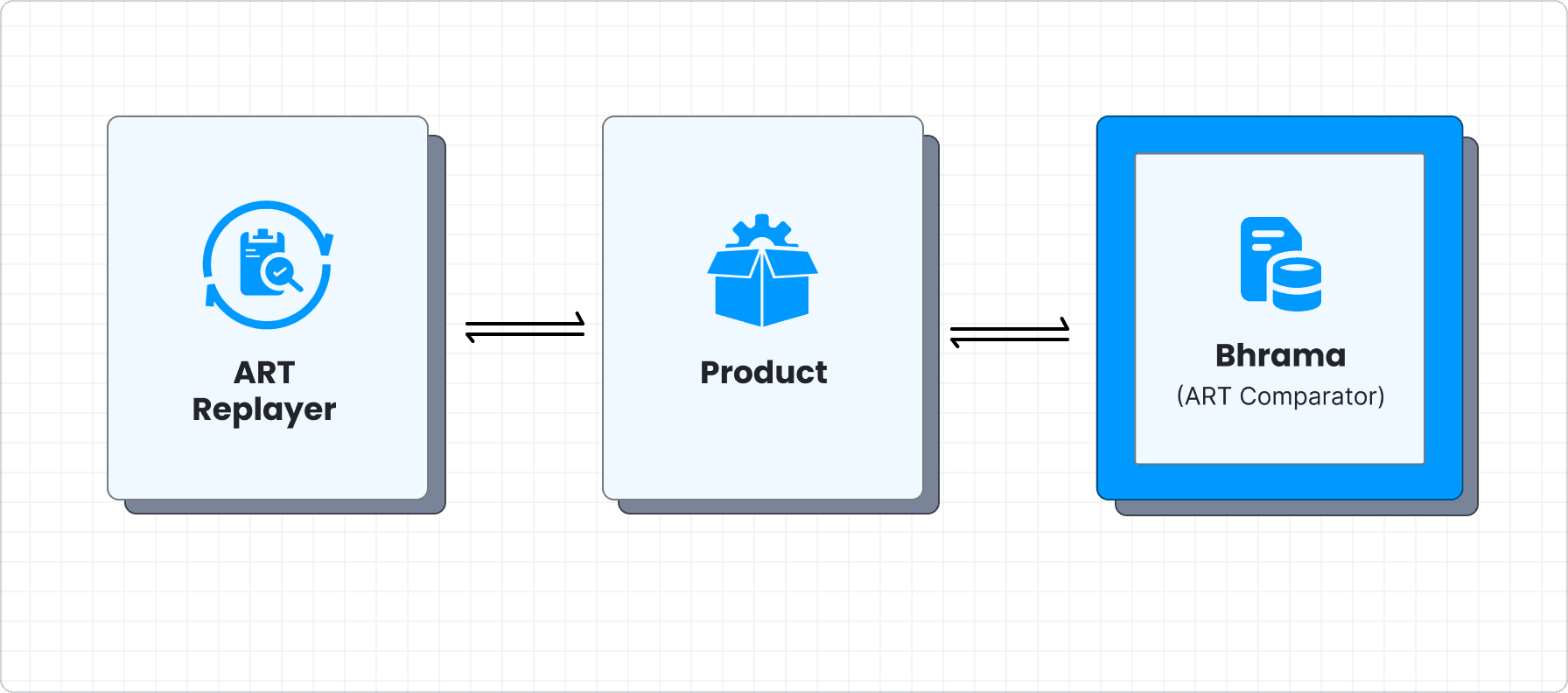

The ART Replayer constructs a request from the recorded data file and sends it to the application, which operates in ART mode. In this mode, the application sends data to the ART Comparator, rather than making actual database calls, Redis calls, or other external calls. The comparator then compares the incoming data with the recorded information.

For example, in the case of database calls, the ART Comparator analyzes find queries by matching the model name and the “where” clause. For update queries, it also compares the “set” clauses. Similarly, for Redis calls, the key is matched and the data is retrieved. When examining external calls, the comparator checks the URL, method, and payload. This efficient approach to application testing ensures that all relevant components are accurately assessed and validated.
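The matching rules described above can be sketched as follows. This is a simplified illustration under stated assumptions: the `Comparator` class, its method names, the `ReplayMismatch` exception, and the dictionary layout of recorded entries are all hypothetical, and a real comparator would likely handle more query types.

```python
from typing import Any


class ReplayMismatch(Exception):
    """Raised when a call made during replay has no matching recorded entry."""


class Comparator:
    def __init__(self, db: list[dict], redis: list[dict], external: list[dict]) -> None:
        self.db, self.redis, self.external = db, redis, external

    @staticmethod
    def _lookup(entries: list[dict], wanted: dict[str, Any], kind: str) -> Any:
        # Return the recorded result of the first entry whose fields all match.
        for entry in entries:
            if all(entry.get(name) == value for name, value in wanted.items()):
                return entry["result"]
        raise ReplayMismatch(f"no recorded {kind} entry matches {wanted}")

    def db_find(self, model: str, where: dict) -> Any:
        # Find queries match on model name and where clause (no set clause).
        wanted = {"model": model, "where": where, "set": None}
        return self._lookup(self.db, wanted, "DB find")

    def db_update(self, model: str, where: dict, set_: dict) -> Any:
        # Update queries additionally compare the set clause.
        wanted = {"model": model, "where": where, "set": set_}
        return self._lookup(self.db, wanted, "DB update")

    def redis_get(self, key: str) -> Any:
        return self._lookup(self.redis, {"key": key}, "Redis")

    def external_call(self, url: str, method: str, payload: Any) -> Any:
        wanted = {"url": url, "method": method, "payload": payload}
        return self._lookup(self.external, wanted, "external call")
```

In this sketch a mismatch raises immediately, which corresponds to marking the session as failed as soon as the new build deviates from the recording.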

Through this approach, the entire live data can be simulated, resulting in greater confidence in the testing process. A correct response is generated only if the data matches; otherwise, an error is sent as the response, and the session is added to the list of failed sessions. But what if you want to proceed despite encountering an error mid-flow and see a consolidated list of errors?

This can be achieved by running the ART Comparator in lenient mode. In case any error is encountered, the comparator keeps track of it and provides a consolidated list of errors for each session. The ART Replayer then compares the final response with the recorded data, ensuring the workflow functions as intended.
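A rough sketch of lenient mode is shown below, reduced to Redis lookups to keep it short. The names `LenientComparator`, `replay_session`, and `handle_order_status` are hypothetical, and returning `None` for an unmatched call is an assumption about how the flow might be allowed to continue.

```python
from typing import Any, Callable


class LenientComparator:
    """Collects mismatches instead of failing on the first one."""

    def __init__(self, recorded_redis: dict[str, Any]) -> None:
        self.recorded_redis = recorded_redis
        self.errors: list[str] = []

    def redis_get(self, key: str) -> Any:
        if key not in self.recorded_redis:
            # Note the error and carry on so later mismatches are also seen.
            self.errors.append(f"redis: no recorded entry for key {key!r}")
            return None
        return self.recorded_redis[key]


def replay_session(
    handler: Callable[[dict, LenientComparator], dict],
    request: dict,
    recorded_response: dict,
    comparator: LenientComparator,
) -> list[str]:
    """Run one session and return its consolidated list of errors."""
    response = handler(request, comparator)
    errors = list(comparator.errors)
    # The final response is still checked against the recording.
    if response != recorded_response:
        errors.append("response: differs from the recorded response")
    return errors


def handle_order_status(request: dict, store: LenientComparator) -> dict:
    """Stand-in for application code running in ART mode."""
    order_id = request["order_id"]
    return {
        "status": store.redis_get(f"order:{order_id}:status"),
        "attempts": store.redis_get(f"order:{order_id}:attempts"),
    }
```

An empty list from `replay_session` means the session passed. A non-empty list holds every mismatch found along the way, followed by the response check if that failed too.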

Dashboard for Data Handling

Handling massive amounts of data from both failed and successful test sessions can be a daunting task. To address this challenge and ensure comprehensive coverage of all dimensions, a user-friendly dashboard has been created. This dashboard displays all dimensions and their combinations, against which ART was executed.

Moreover, the dashboard categorizes failed sessions by error type, streamlining the analysis process and facilitating rapid troubleshooting.
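The grouping behind such a view can be illustrated with a short sketch. The session layout used here (a `session_id` plus a list of errors, each with a `type`) is an assumption made for the example, not the dashboard's actual data model.

```python
from collections import defaultdict


def group_by_error_type(failed_sessions: list[dict]) -> dict[str, list[str]]:
    """Map each error type to the IDs of the sessions in which it occurred."""
    groups: dict[str, list[str]] = defaultdict(list)
    for session in failed_sessions:
        # Count each error type once per session, even if it occurred repeatedly.
        for error_type in sorted({error["type"] for error in session["errors"]}):
            groups[error_type].append(session["session_id"])
    return dict(groups)
```

Grouping this way lets one root cause, such as a changed where clause, surface as a single bucket rather than as many unrelated failed sessions.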

With the help of ART and the intuitive dashboard, developers can now confidently test their products using real-time data, leading to more reliable and robust outcomes.

Conclusion

In this blog post, we highlighted important aspects of Automatic Regression Testing and engineering at Juspay. This was just a flavor of what goes on behind the scenes and how we ensure that our systems are built to be growth drivers for our merchants. We hope this offered some insight into our ethos and gave you a peek into what we do and how we collaborate.
